AI adoption is happening faster than governance.

Developers are already using AI tools like ChatGPT, Claude, Copilot, Cursor, and internal assistants. But companies often have no visibility or control over what data is being sent to those models.

Security teams are often forced to choose between blocking AI entirely or accepting unknown risk. The AI Gateway provides a third option: controlled adoption with policy enforcement.

Common risks

- Source code pasted into external AI tools

- Secrets accidentally included in prompts

- Customer data sent to external providers

- No audit trail for AI usage

Control AI requests without changing the developer workflow.

Posturio AI Gateway lets developers and small teams inspect prompts, apply policy, approve models, and route requests without rebuilding controls in every internal tool.

MCP tool audit ships in the same console. This hosted demo focuses on the LLM path; ask for a 15-minute walkthrough to see MCP server approval, per-key tool scope, and redacted tool traces alongside LLM calls.

- OpenAI-compatible request flow developers can test immediately

- Prompt inspection, blocking, redaction, and routing policies

- Structured request metadata for review, cost visibility, and audit workflows

- Managed MCP tool access with curated catalogs and per-key scope

- Self-hosted or Posturio-hosted deployment options when you are ready to move beyond the demo


What an AI gateway does

An AI gateway sits between internal applications and model providers. It centralizes prompt inspection, policy enforcement, approved-model access, routing, managed MCP tool access, and request logging so teams can control AI behavior without duplicating guardrails in every application.

- Inspect prompts before data leaves internal systems

- Route requests by policy, workload, or data sensitivity

- Restrict access to approved providers and models

- Curate hosted MCP tools and expose them only to approved keys

- Return structured metadata for review, cost, and audit workflows
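The "restrict access" and "return structured metadata" items can be pictured as a single check at the gateway. The Python sketch below assumes a hypothetical provider-to-model allowlist and hypothetical metadata field names. It illustrates the idea and is not Posturio's actual policy engine or response schema.

```python
import time
import uuid
from dataclasses import dataclass, field

# Hypothetical allowlist: provider -> approved model names
APPROVED_MODELS = {
    "openai": {"gpt-4o-mini"},
    "anthropic": {"claude-sonnet"},
}


@dataclass
class GatewayDecision:
    allowed: bool
    reason: str
    metadata: dict = field(default_factory=dict)


def check_model(provider: str, model: str) -> GatewayDecision:
    """Allow the request only if the provider/model pair is on the allowlist."""
    allowed = model in APPROVED_MODELS.get(provider, set())
    reason = "approved_model" if allowed else "model_not_approved"
    # Structured metadata a reviewer or audit pipeline could consume
    metadata = {
        "request_id": str(uuid.uuid4()),
        "timestamp": time.time(),
        "provider": provider,
        "model": model,
        "decision": "allow" if allowed else "block",
        "reason": reason,
    }
    return GatewayDecision(allowed, reason, metadata)
```

Returning the decision and its metadata together means every request, allowed or not, leaves the same reviewable record.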

Secure AI gateway, model gateway, and LLM gateway usually describe the same control layer

Buyers often search for a secure AI gateway, AI gateway platform, LLM gateway, or model gateway. In practice, they are usually looking for the same architecture decision: one reviewable layer that handles prompt inspection, approved-model access, routing, and audit data before requests reach external or local models.

- Secure AI gateway: emphasizes prompt inspection, policy enforcement, and data controls.

- LLM gateway: emphasizes model routing, provider policy, and shared request handling.

- Model gateway: emphasizes approved-model access and the layer between apps and providers.

- AI gateway platform: emphasizes shared policy, operator review, and deployment controls.

Use the MCP pages when tool access needs its own evaluation path

MCP gateway

Start here when the real question is how Model Context Protocol access should be controlled in production, not only whether MCP is supported.

Governed MCP tools

Review curated server catalogs, org approval, per-key tool scope, prompt gating, and redacted traces for tool-backed requests.

MCP security

Use the security page when buyers need to separate raw protocol support from production approval, blocked execution, and operator review.

MCP registry governance

See how registry discovery, internal catalogs, and production enablement should stay separate as MCP adoption grows.
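One way to keep discovery, cataloging, and production enablement apart is to treat them as explicit stages. A tool can only move through them one step at a time, so nothing found in a public registry jumps straight to production. The stage names and transition table below are illustrative assumptions, not Posturio's data model.

```python
from enum import Enum


class ToolStage(Enum):
    DISCOVERED = 1  # seen in a public or vendor registry
    CATALOGED = 2   # reviewed and added to the internal catalog
    ENABLED = 3     # approved for production use


# Forward moves advance one stage; backward moves model de-listing or disabling
ALLOWED_TRANSITIONS = {
    ToolStage.DISCOVERED: {ToolStage.CATALOGED},
    ToolStage.CATALOGED: {ToolStage.ENABLED, ToolStage.DISCOVERED},
    ToolStage.ENABLED: {ToolStage.CATALOGED},
}


def advance(current: ToolStage, target: ToolStage) -> ToolStage:
    """Return the new stage, or raise if the move skips a review step."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.name} to {target.name}")
    return target
```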

MCP gateway alternatives

Compare direct tool integrations, narrower gateway layers, and a production MCP control path without collapsing the decision into a checkbox list.

MCP tool access control

Go deeper on org-level approval, narrower key scope, and the day-two workflow required once MCP tools are shared across teams.
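Org-level approval and narrower key scope compose naturally as a set intersection. A key can call a tool only if the org has approved it and the key's own scope includes it. The tool names, key names, and helper functions below are hypothetical, chosen only to illustrate that rule.

```python
# Hypothetical org-level approval: tools an admin has approved for the whole org
ORG_APPROVED = {"github.search_issues", "jira.get_ticket", "slack.post_message"}

# Hypothetical per-key scope: each API key requests a narrower subset
KEY_SCOPES = {
    "pk_demo_alice": {"github.search_issues", "jira.get_ticket"},
    "pk_demo_bot": {"slack.post_message", "shell.exec"},  # shell.exec is not org-approved
}


def effective_tools(api_key: str) -> set[str]:
    """A key can use only tools that are both org-approved and in its own scope."""
    return ORG_APPROVED & KEY_SCOPES.get(api_key, set())


def authorize_tool_call(api_key: str, tool: str) -> bool:
    return tool in effective_tools(api_key)
```

Because the intersection is computed at call time, revoking an org approval immediately narrows every key that listed that tool.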

Choose the operating model that fits your team

Deployment options

- Self-hosted in your enterprise cloud or on-prem environment

- Prompts and responses stay under your internal controls when self-hosted

- Posturio sells software, implementation, and support services

- Posturio-hosted option for teams that want a managed deployment

One AI gateway for policy and routing

Use the same API contract across provider choices. The gateway inspects each request, enforces policy, and returns metadata so engineering and security teams can review usage consistently.

- OpenAI-compatible request and response patterns

- Secret detection, redaction, and policy blocking

- Provider/model routing by policy or prompt type

- Structured metadata for audit workflows
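Redaction differs from blocking in that the request still goes upstream, with matched values replaced. Here is a minimal sketch, assuming simple regular-expression detectors. The pattern set and the `[REDACTED:...]` placeholder format are illustrative assumptions, and real secret scanners use far larger rule sets.

```python
import re

# Illustrative patterns only; production detectors use many more rules
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "generic_secret": re.compile(r"(?i)\b(password|secret|token)\s*[:=]\s*\S+"),
}


def redact(prompt: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a typed placeholder and count what was redacted."""
    counts: dict[str, int] = {}
    for name, pattern in SECRET_PATTERNS.items():
        prompt, n = pattern.subn(f"[REDACTED:{name}]", prompt)
        if n:
            counts[name] = n
    return prompt, counts
```

The per-type counts are the kind of value that could be returned as request metadata without exposing the secret itself.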

Example policy outcomes

Secret detection

Explain this code: AWS_ACCESS_KEY_ID=AKIA...

Result: Prompt blocked before upstream call
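The key property in this example is that the check runs before the upstream call, so a flagged prompt never reaches the provider. The sketch below assumes regex detection of long-term AWS access key IDs (which begin with `AKIA`) and of the standard AWS credential variable names. `handle` and `call_upstream` are hypothetical names, not part of any Posturio API.

```python
import re
from typing import Callable

# Long-term AWS access key IDs: "AKIA" followed by 16 uppercase letters/digits
AWS_KEY_ID = re.compile(r"AKIA[0-9A-Z]{16}")
# Also flag the variable names themselves, even when the value is truncated
AWS_ENV_NAME = re.compile(r"\bAWS_(SECRET_ACCESS_KEY|ACCESS_KEY_ID)\b")


def handle(prompt: str, call_upstream: Callable[[str], str]) -> dict:
    """Block before any upstream call if the prompt looks like it contains AWS credentials."""
    if AWS_KEY_ID.search(prompt) or AWS_ENV_NAME.search(prompt):
        return {"status": "blocked", "reason": "secret_detected", "upstream_called": False}
    # Only clean prompts are forwarded to the model provider
    return {"status": "ok", "response": call_upstream(prompt), "upstream_called": True}
```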

Sensitive data routing

Customer SSN: 123-45-6789 Summarize this information

Result: PII detected, routed to local model
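Sensitivity-based routing can be sketched as a detector that picks the destination model instead of rejecting the request. This assumes a simple pattern for US Social Security numbers in `ddd-dd-dddd` form. The `local-model` and `gpt-4o-mini` route labels are placeholders, not Posturio configuration.

```python
import re

# US SSN in the common ddd-dd-dddd form (illustrative; real PII detection needs more rules)
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Hypothetical route targets
LOCAL_MODEL = "local-model"
DEFAULT_MODEL = "gpt-4o-mini"


def route_by_sensitivity(prompt: str) -> dict:
    """Send prompts containing PII to a local model so the data stays on internal systems."""
    if SSN.search(prompt):
        return {"model": LOCAL_MODEL, "reason": "pii_detected"}
    return {"model": DEFAULT_MODEL, "reason": "default"}
```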

Model routing

Refactor this Python function

Result: Code prompt routed to Claude
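Routing by prompt type needs some classifier in front of the routing table. The sketch below uses a crude keyword heuristic as a stand-in. The keyword list and the `claude` / `gpt-4o-mini` labels are assumptions for illustration, and a production gateway might classify prompts quite differently.

```python
import re

# Crude signals that a prompt is about code (illustrative heuristic only)
CODE_HINTS = re.compile(
    r"\b(refactor|function|class|python|javascript|typescript|bug|compile)\b"
    r"|\bdef |=>",
    re.IGNORECASE,
)

# Hypothetical routing table: prompt type -> model label
ROUTES = {"code": "claude", "general": "gpt-4o-mini"}


def classify(prompt: str) -> str:
    return "code" if CODE_HINTS.search(prompt) else "general"


def route_by_prompt_type(prompt: str) -> str:
    return ROUTES[classify(prompt)]
```

In practice a sensitivity check like the SSN rule would usually run first, so PII routing takes precedence over prompt-type routing.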


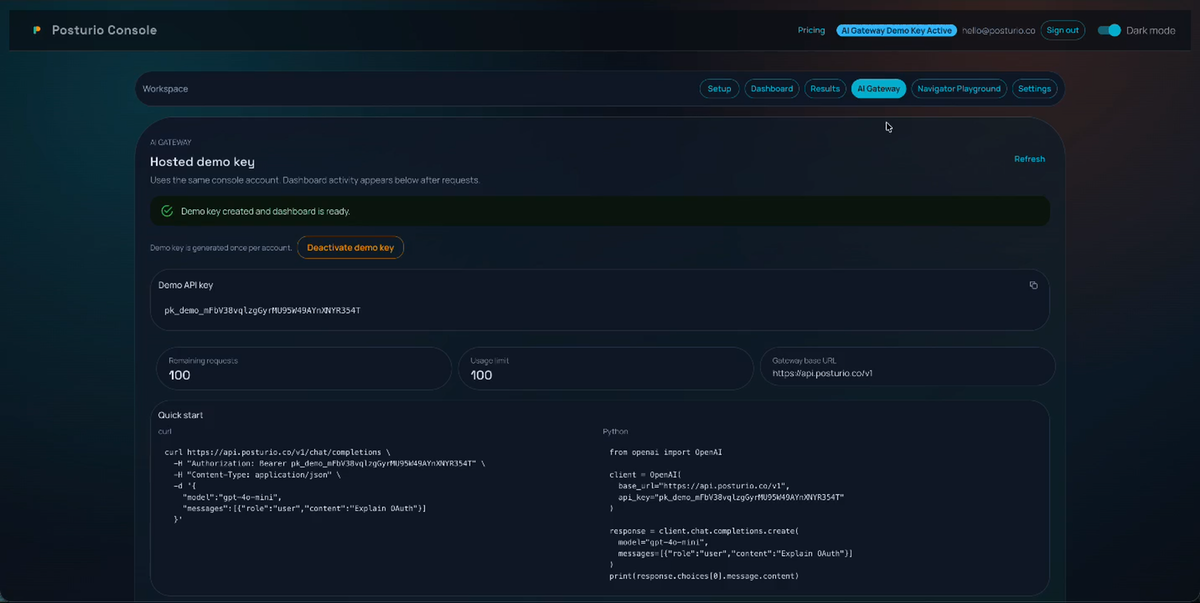

Run a request in the browser

1. Create demo access

2. Receive a live demo key

3. Review policy, routing, and request metadata, then continue to the console if needed


curl

```shell
curl https://api.posturio.co/v1/chat/completions \
  -H "Authorization: Bearer USER_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "gpt-4o-mini",
    "messages": [{"role": "user", "content": "Explain OAuth"}]
  }'
```

Python

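```python
from openai import OpenAI

# Point the standard OpenAI client at the gateway instead of api.openai.com
client = OpenAI(
    base_url="https://api.posturio.co/v1",
    api_key="USER_API_KEY",
)

# Send the same chat completion request as the curl example
response = client.chat.completions.create(
    model="gpt-4o-mini",
    messages=[{"role": "user", "content": "Explain OAuth"}],
)
print(response.choices[0].message.content)
```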


Continue into the platform

Hosted demo on this page

Stay in the browser playground to validate policy behavior, prompt blocking, and model routing without leaving this page.

AI Gateway console

Continue into AI Gateway admin to manage platform policies, API keys, request review, and investigations in one place.

Navigator in the same console

Continue into Navigator to search private knowledge, manage sources, and resume grounded conversations on the same platform account.

Review the product before deployment

Live demo

Send a real request through AI Gateway on this page, then inspect the response metadata and quota behavior immediately.

Platform walkthrough

Watch the demo issue a key, block a prompt, route requests, and hand off into the shared platform flow.

Console view

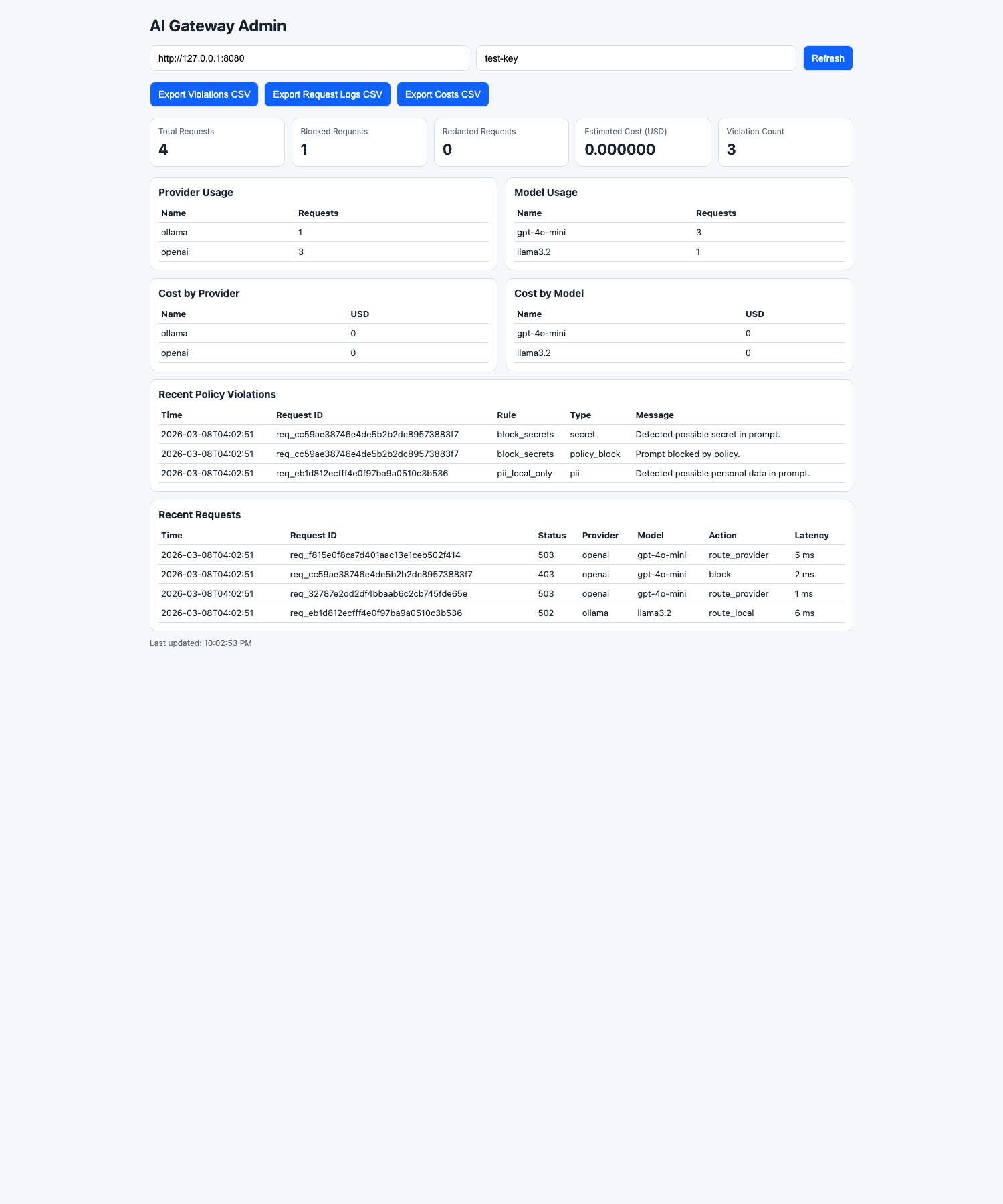

Review the current gateway dashboard with request volume, blocked prompts, provider usage, and estimated cost trends from the MVP console.

Visibility for engineering and security teams

The gateway provides an audit trail for AI usage across engineering tools and internal applications.


Gateway Dashboard

Live admin view from the current MVP showing request volume, blocked prompts, provider/model usage, and estimated cost trends.
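Dashboard numbers like these can be derived by rolling per-request metadata up by day. The sketch below assumes a hypothetical `RequestRecord` shape with a day, provider, model, blocked flag, and estimated cost. The gateway's real metadata fields may differ.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class RequestRecord:
    # Hypothetical fields; a real gateway's metadata schema may differ
    day: str          # ISO date string, e.g. "2024-05-01"
    provider: str
    model: str
    blocked: bool
    est_cost_usd: float


def daily_metrics(records: list[RequestRecord]) -> dict[str, dict]:
    """Roll per-request metadata up into daily dashboard numbers."""
    out: dict[str, dict] = defaultdict(
        lambda: {"requests": 0, "blocked": 0, "by_model": Counter(), "est_cost_usd": 0.0}
    )
    for r in records:
        day = out[r.day]
        day["requests"] += 1
        if r.blocked:
            day["blocked"] += 1
        else:
            # Blocked prompts never reach a provider, so they add no usage or cost
            day["by_model"][f"{r.provider}/{r.model}"] += 1
            day["est_cost_usd"] += r.est_cost_usd
    return dict(out)
```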

Product walkthrough

Walk through the hosted gateway flow: signup, demo key issuance, blocked prompts, routed requests, and metadata in responses.

Create a demo session, get a live key, and send a request.

Create evaluation access here and you will receive a live `pk_demo_*` key immediately so you can test an allowed request, a blocked prompt, and routing behavior before opening the console.

Returning user? Sign in below and your existing demo key will be restored into the quick-start and browser playground panels above without losing the current evaluation flow.

Demo access includes request caps and abuse protection. Use Contact Us when you need more volume or a production deployment path.
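Per-key request caps are commonly implemented with a fixed-window counter. The sketch below is one such limiter with an injectable clock for testing. The class name, limits, and windowing choice are assumptions and say nothing about how the hosted demo actually enforces its caps.

```python
import time
from typing import Callable


class FixedWindowLimiter:
    """Allow at most `limit` requests per key in each `window_s`-second window."""

    def __init__(self, limit: int, window_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self.limit = limit
        self.window_s = window_s
        self.clock = clock
        self._windows: dict[str, tuple[int, int]] = {}  # key -> (window index, count)

    def allow(self, api_key: str) -> bool:
        window = int(self.clock() // self.window_s)
        start, count = self._windows.get(api_key, (window, 0))
        if start != window:
            count = 0  # a new window has started, so reset the counter
        if count >= self.limit:
            self._windows[api_key] = (window, count)
            return False
        self._windows[api_key] = (window, count + 1)
        return True
```

A fixed window is simple but permits bursts around window boundaries; a token bucket or sliding window smooths that out at the cost of a little more state.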

Start the demo

Create or restore access here. Use the demo to inspect policy, routing, and request metadata before opening the console.


AI Gateway and Navigator are two areas of the same Posturio platform. Start in the demo, then open the shared console when you need policy review or grounded search.

Need help with architecture, deployment, or procurement?

The self-serve demo is the best way to evaluate the gateway. For deployment planning, use our Contact Us page so requests are handled in one place.

Contact us

Reach us via the Contact Us page for deployment support and customer success coordination.